Projects

UCI - Zotbotics

Sep. 2025 - Present

Software Subteam

For this project, my team and I are developing a TARS robot inspired by the movie 'Interstellar'.

We are divided into three main sub-teams (Mechanical, Electrical, and Software), and I am part of the Software sub-team.

During the first quarter, I mainly focused on integrating an AI ChatBot and prototyping a lightweight mini dashboard to visualize system status and telemetry.

The AI ChatBot pipeline uses three API keys, one each for speech-to-text (STT), text response generation, and text-to-speech (TTS).
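As a rough illustration, the three-stage pipeline can be sketched in Python as below. The stage functions (`fake_stt`, `fake_respond`, `fake_tts`) are hypothetical stand-ins that make no network calls; they are not the actual services used on TARS.

```python
from typing import Callable

# Each stage is a plain callable so a real API client can be swapped in later.
SpeechToText = Callable[[bytes], str]
TextResponder = Callable[[str], str]
TextToSpeech = Callable[[str], bytes]


def run_chat_turn(
    audio_in: bytes,
    stt: SpeechToText,
    respond: TextResponder,
    tts: TextToSpeech,
) -> bytes:
    """Run one voice turn: audio -> transcript -> reply text -> reply audio."""
    transcript = stt(audio_in)
    reply_text = respond(transcript)
    return tts(reply_text)


# Hypothetical stand-ins for the three API-backed services.
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_respond(prompt: str) -> str:
    return f"You said: {prompt}"


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")


reply_audio = run_chat_turn(b"hello TARS", fake_stt, fake_respond, fake_tts)
```

Keeping each stage behind a simple callable means any one provider can be replaced without touching the other two.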

In the next two quarters, our software team will focus on integrating motor control, real-time telemetry, and autonomous behavior, while collaborating closely with the electrical and mechanical teams to ensure seamless system integration.

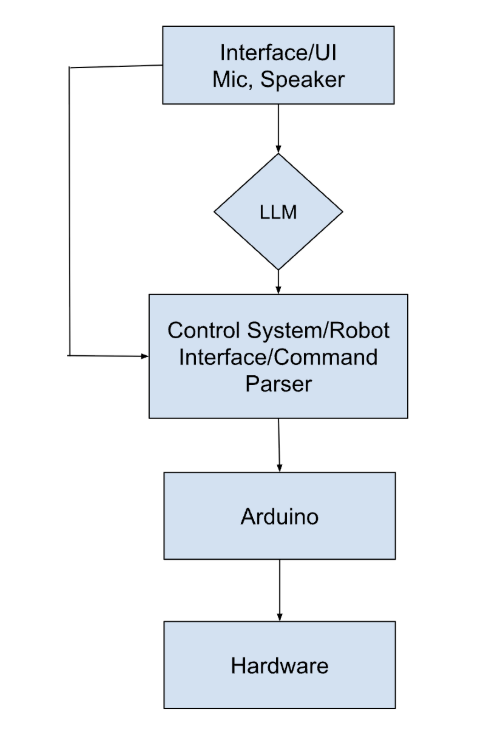

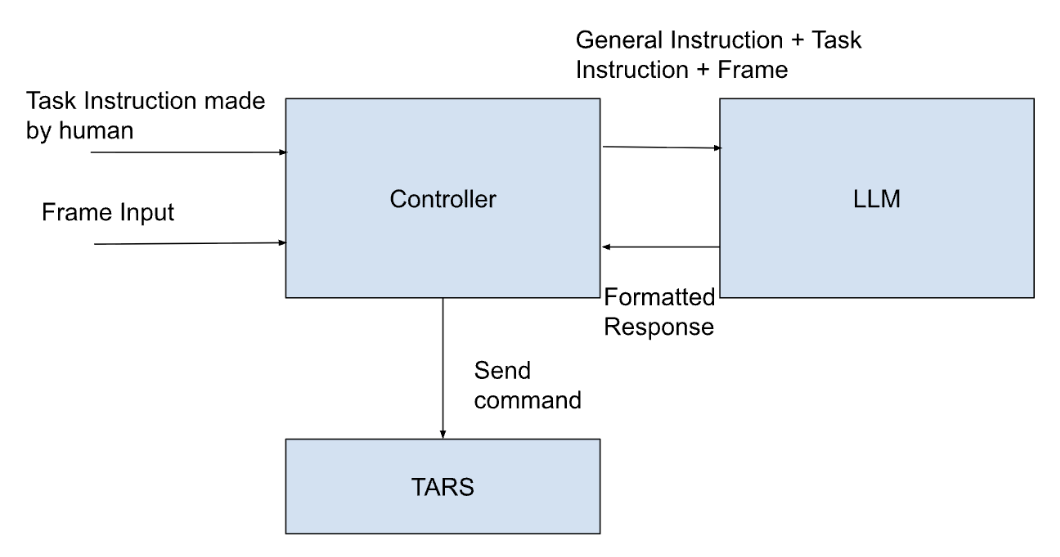

Below are the hierarchical flow diagram of the system and the theoretical architecture of the zero-shot vision-language model that we will experiment with on TARS.

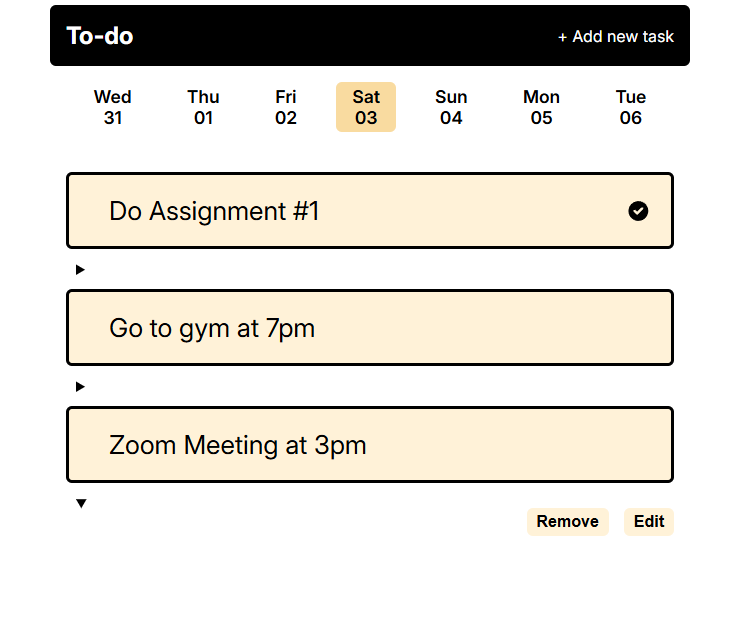

This is my first full-stack web development project. I implemented a Flask-based to-do list web application backed by MongoDB, with full CRUD functionality for task management rendered server-side with Jinja2. Each task can be marked as complete or incomplete, and that status is saved in the database. MongoDB persists the task data, enabling efficient creation, updates, and deletion of tasks. This project strengthened my understanding of backend routing, templating, and database integration. For future improvements, I plan to add user authentication and user-specific task management.
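The CRUD operations described above can be sketched in plain Python. This is only an illustration: `TaskStore` is a hypothetical in-memory stand-in for the MongoDB collection, and the field names (`title`, `done`) are assumptions, not the app's actual schema.

```python
import itertools
from typing import Dict, List, Optional


class TaskStore:
    """In-memory stand-in for a MongoDB collection of task documents."""

    def __init__(self) -> None:
        self._tasks: Dict[int, dict] = {}
        self._ids = itertools.count(1)

    def create(self, title: str) -> dict:
        # New tasks start out incomplete.
        task = {"_id": next(self._ids), "title": title, "done": False}
        self._tasks[task["_id"]] = task
        return task

    def list_all(self) -> List[dict]:
        return list(self._tasks.values())

    def update_title(self, task_id: int, title: str) -> Optional[dict]:
        task = self._tasks.get(task_id)
        if task is not None:
            task["title"] = title
        return task

    def toggle_done(self, task_id: int) -> Optional[dict]:
        # Flip between complete and incomplete.
        task = self._tasks.get(task_id)
        if task is not None:
            task["done"] = not task["done"]
        return task

    def delete(self, task_id: int) -> bool:
        return self._tasks.pop(task_id, None) is not None


store = TaskStore()
task = store.create("Solder battery leads")
store.toggle_done(task["_id"])
```

In the real application, each method would correspond to a Flask route that calls the database and re-renders a Jinja2 template.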

Drone

Sep. 2025 - Dec. 2025

As part of a quarter-long project (EECS 195: Drone), I primarily focused on hardware assembly and soldering.

Below is a timeline of key milestone tasks I completed:

- Soldered all 0.1" headers on the flight controller for reliable electrical connections

- Soldered battery leads, ensuring secure joints and proper polarity

- Soldered the ESC signal wires to all four motors, ensuring proper motor rotation direction (CW/CCW)

- Built a drone frame and mounted the components above on it, along with a radio receiver

- Mounted a GPS module on top and verified its position on the map in Mission Planner

- Mounted a camera with HUD on the front and connected it to an FPV monitor

- Implemented ESP32-based WiFi telemetry using DroneBridge for ground communication

- Flew a maiden flight after completing a full pre-flight safety checklist

- Configured waypoint missions and geofence with Mission Planner on ArduPilot

- Executed a simple autonomous waypoint flight

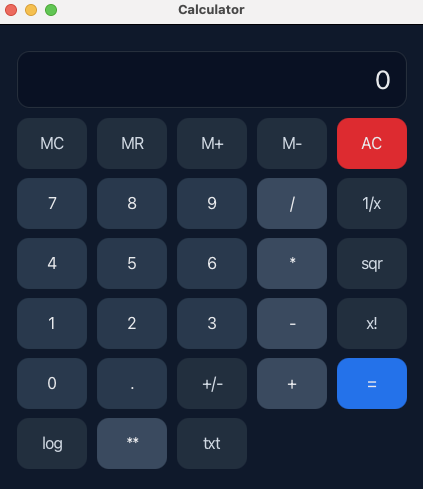

I created this JavaFX GUI calculator, which supports both basic arithmetic and advanced mathematical operations such as exponentiation, logarithmic functions, and factorials with input constraints.

I applied object-oriented principles to separate the UI, logic, and testing layers, and customized the interface with CSS for a cleaner look.

I made a simple command-line tool in C to analyze and filter log files. It is designed to make application logs easier to inspect and interpret.

It parses a common timestamp, level, and message line format, filters log entries by level (INFO, WARNING, ERROR), and sorts entries by time.

It also produces a summary count for each log level. I plan to extend its functionality to support additional output formats and log structures.

By transforming raw text logs into structured and filtered output, it facilitates faster debugging and a clearer understanding of runtime behavior.
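The parse, filter, sort, and summarize flow can be sketched in Python as below. The tool itself is written in C, so this is an illustration only. The exact line layout (`YYYY-MM-DD HH:MM:SS LEVEL message`) and the names `LogEntry`, `parse_line`, and `analyze` are assumptions.

```python
from collections import Counter
from datetime import datetime
from typing import List, NamedTuple, Optional


class LogEntry(NamedTuple):
    timestamp: datetime
    level: str
    message: str


def parse_line(line: str) -> Optional[LogEntry]:
    """Parse 'YYYY-MM-DD HH:MM:SS LEVEL message'; return None if malformed."""
    parts = line.strip().split(" ", 3)
    if len(parts) < 4:
        return None
    try:
        timestamp = datetime.strptime(f"{parts[0]} {parts[1]}", "%Y-%m-%d %H:%M:%S")
    except ValueError:
        return None
    return LogEntry(timestamp, parts[2], parts[3])


def analyze(lines: List[str], level: Optional[str] = None):
    """Return (time-sorted entries, optionally filtered by level; per-level counts)."""
    entries = [e for e in map(parse_line, lines) if e is not None]
    # Counts cover every parsed entry, regardless of the filter.
    counts = Counter(e.level for e in entries)
    if level is not None:
        entries = [e for e in entries if e.level == level]
    return sorted(entries, key=lambda e: e.timestamp), counts


sample = [
    "2025-01-02 10:05:00 ERROR disk full",
    "2025-01-02 09:00:00 INFO service started",
    "not a log line",
    "2025-01-02 09:30:00 ERROR connection lost",
]
errors, counts = analyze(sample, level="ERROR")
```

Malformed lines are skipped rather than treated as fatal, which keeps one bad line from hiding the rest of the log.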

I gained hands-on experience in Linux operations, basic networking, cryptography, and steganography through self-directed learning on the TryHackMe platform.

In the spirit of promoting an ethical hacker culture, I designed and built a beginner-friendly website using Google Sites that simplifies complex cybersecurity topics into accessible, actionable content.

MailPilot

Oct. 2025 - Present

Will be updated.

Research

Undergraduate Research Assistant

I am currently a member of Professor Mohammad Al Faruque's research lab.

During my first quarter, I was tasked with exploring the capabilities of the OpenUAV Vision-Language-Action (VLA) model and conducting training and performance evaluations.

To analyze datasets, I have built several Python scripts on top of this platform, including formatting results for each scene,

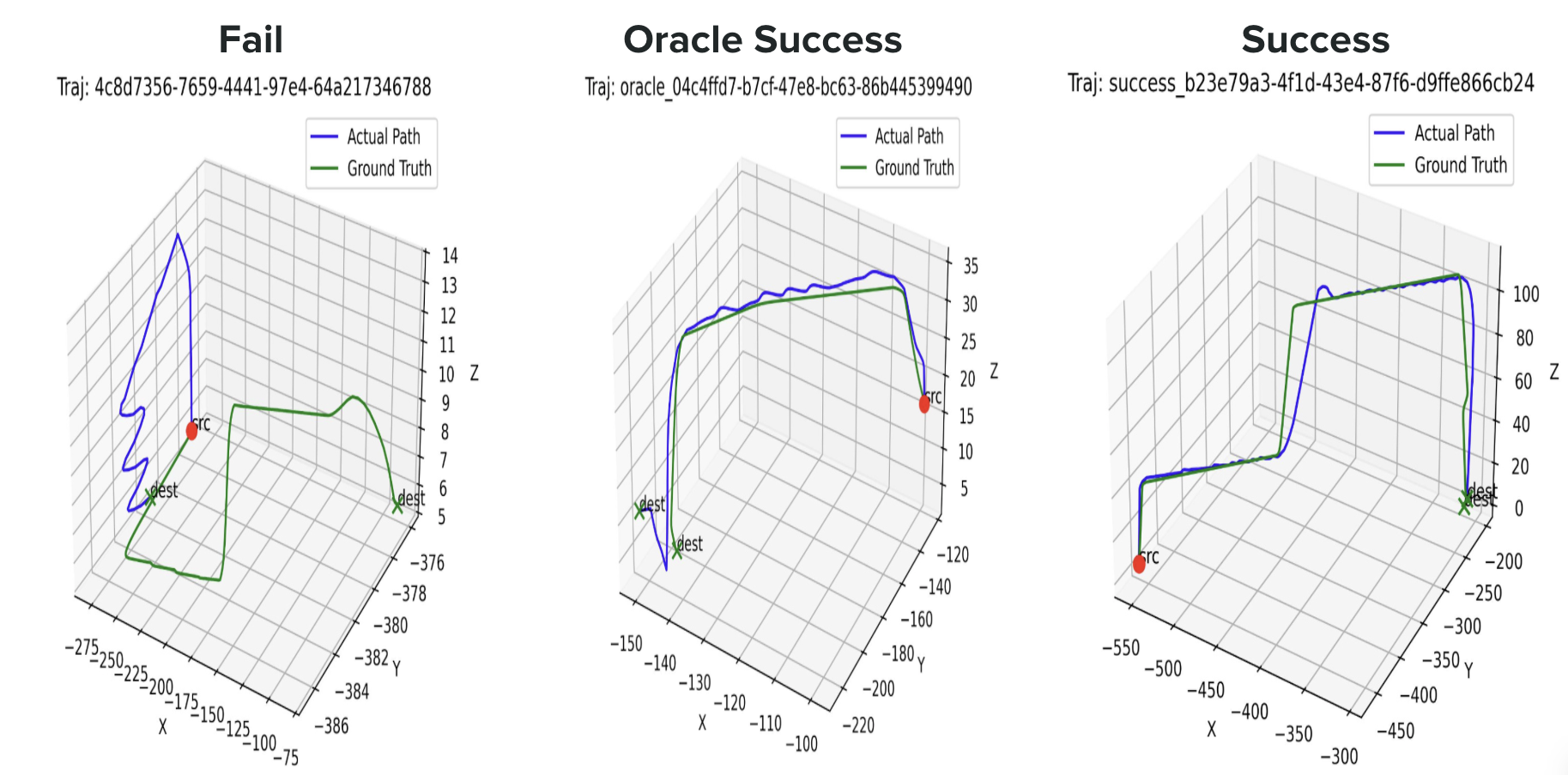

creating a video out of sequential frames using OpenCV, and visualizing 3D plot trajectories for a comparison between model-predicted and ground truth trajectories.

In addition, I am currently developing a zero-shot LLM-based software controller deployed on a physical drone (DJI Tello). This work aims to evaluate whether the proposed approach can operate reliably in real-world, practical environments beyond simulation, and to better understand the challenges of transferring VLA models to physical robotic systems.

Below is an example of the 3D plots and video.

Experience

I am participating in the IEEE OPS Program, in which I build eight hands-on projects, ranging from beginner to advanced, followed by a custom ESP32-based rover capstone.

Here is the list of projects I have completed so far. (More will be added through June 2026.)

My first OPS project was to build a simple LED circuit powered by a 9V battery. I first constructed the circuit on a breadboard, then soldered it onto a perfboard. A simple Ohm's Law calculation was required to determine the appropriate resistor value.
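The resistor calculation follows from Ohm's Law applied to the voltage left over after the LED's forward drop. A minimal sketch is below; the 2.0 V forward voltage and 20 mA target current are assumed example values, not measurements from the actual circuit.

```python
def led_series_resistor(supply_v: float, forward_v: float, current_a: float) -> float:
    """Return the series resistance in ohms: R = (V_supply - V_forward) / I."""
    if current_a <= 0:
        raise ValueError("current must be positive")
    if forward_v >= supply_v:
        raise ValueError("supply voltage must exceed the LED forward voltage")
    return (supply_v - forward_v) / current_a


# Assumed values: 9 V battery, 2.0 V forward drop, 20 mA target current.
resistance_ohms = led_series_resistor(9.0, 2.0, 0.020)  # about 350 ohms
```

In practice, the next standard resistor value above the result is usually chosen so the LED current stays under the target.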

In this project, I built a three-key electronic piano using a 555 Timer IC. I configured the circuit in astable mode to generate oscillating signals, which were fed to a passive piezo buzzer, with a capacitor filtering out low frequencies to produce a clean sound. Once it tested successfully on a breadboard, I soldered it onto a custom-designed PCB.
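For reference, the output frequency of a 555 timer in the standard astable configuration is f = 1 / (ln 2 · (R1 + 2·R2) · C). The sketch below evaluates it for three keys, assuming each key selects a different R2. All component values here are hypothetical and not taken from the actual board.

```python
import math


def astable_frequency_hz(r1_ohms: float, r2_ohms: float, c_farads: float) -> float:
    """Standard 555 astable formula: f = 1 / (ln(2) * (R1 + 2*R2) * C)."""
    return 1.0 / (math.log(2) * (r1_ohms + 2.0 * r2_ohms) * c_farads)


# Hypothetical component values: one shared R1 and C, one R2 per key.
R1_OHMS = 1_000.0
C_FARADS = 100e-9
KEY_R2_OHMS = [10_000.0, 8_200.0, 6_800.0]

# A smaller R2 shortens the charge/discharge cycle, so the pitch rises.
key_frequencies = [astable_frequency_hz(R1_OHMS, r2, C_FARADS) for r2 in KEY_R2_OHMS]
```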

For this project, I programmed an ESP32-C3Fx4 to control a dimmable light using three potentiometers. I developed Arduino code that reads the potentiometer inputs and uses PWM signals to dynamically control the multi-color output of an RGB LED.
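The core of this kind of control is scaling each potentiometer reading to a PWM duty value. The Python sketch below illustrates that mapping only; it is not the Arduino code. It assumes a 12-bit ADC range (0 to 4095) and an 8-bit PWM range (0 to 255), and the function names are hypothetical.

```python
from typing import Tuple

ADC_MAX = 4095  # assumed 12-bit ADC full-scale reading
PWM_MAX = 255   # assumed 8-bit PWM duty range


def adc_to_duty(reading: int) -> int:
    """Scale a raw ADC reading to a PWM duty value, clamping out-of-range input."""
    clamped = max(0, min(ADC_MAX, reading))
    return clamped * PWM_MAX // ADC_MAX


def rgb_duties(pot_readings: Tuple[int, int, int]) -> Tuple[int, int, int]:
    """Map three potentiometer readings to (red, green, blue) duty values."""
    red, green, blue = pot_readings
    return adc_to_duty(red), adc_to_duty(green), adc_to_duty(blue)


# One pot at minimum, one near the middle, one at maximum.
duties = rgb_duties((0, 2048, 4095))
```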

Continuing with the ESP32, I implemented an embedded control system in which servo motion is adjusted based on distance measurements from an ultrasonic sensor. The servo angle switches between 0° and 90° to open or close the lid of a trash can.
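The control logic can be sketched in Python as below, as an illustration rather than the actual firmware. It assumes the sensor reports a round-trip echo time in microseconds, that 90° means open, and a hypothetical 20 cm trigger distance.

```python
SPEED_OF_SOUND_CM_PER_US = 0.0343  # approximate speed of sound in air
OPEN_THRESHOLD_CM = 20.0           # hypothetical trigger distance
LID_OPEN_DEG = 90                  # assumption: 90 degrees is the open position
LID_CLOSED_DEG = 0


def echo_to_distance_cm(echo_us: float) -> float:
    """Convert a round-trip echo time to a one-way distance in centimeters."""
    return echo_us * SPEED_OF_SOUND_CM_PER_US / 2.0


def lid_angle(distance_cm: float) -> int:
    """Open the lid when an object is closer than the threshold, else close it."""
    return LID_OPEN_DEG if distance_cm < OPEN_THRESHOLD_CM else LID_CLOSED_DEG


# A 1000 microsecond echo is about 17 cm away, so the lid opens.
angle = lid_angle(echo_to_distance_cm(1000.0))
```

The echo time is halved because the pulse travels to the object and back.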